Managing the Human–AI Organisation

Six capabilities leaders need as AI enters everyday work

If you eavesdrop on leadership meetings and boardroom discussions across industries today, you will hear a familiar question surface again and again: How should we use AI?

A year ago, that question belonged mostly to technology teams and a handful of companies experimenting at the frontier. In 2025, many organisations were still running pilots, building prototypes, and exploring what generative AI might be capable of. The technology was promising and occasionally impressive, but it had not yet become an everyday operational concern for most leaders.

In 2026, across industries and functions, leaders are waking up to both the potential and the risk of AI. Systems that once generated text or answered questions are beginning to assist real work—analysing data, reviewing documents, drafting communication, flagging risks, and in some cases recommending actions that influence operational decisions. There is a growing recognition that using AI well may be critical to the growth and survival of both careers and companies.

A friend of mine who works in the risk department of a large financial institution called recently after trying the latest version of Claude. Until then he had been fairly dismissive of these systems. “The outputs were interesting,” he told me, “but unreliable.”

But the latest models, to his surprise, were far more capable. Some of the analysis, he said, read very much like the work of a junior analyst.

Then he paused and asked: if systems like these are already this capable today, where will they be in a few years? And more importantly, what will that mean for the way our organisations work?

These are the real questions emerging in leadership rooms today. Yet many of our discussions about AI are still framed primarily through a technology lens (model capabilities, tools, prompts, and infrastructure) or through a job-replacement lens.

In my own conversations with business leaders across sectors, and through years of mentoring startups as part of the Google for Startups program, a different perspective keeps surfacing. It sounds obvious when stated plainly, but most organisations have not yet internalised it: integrating AI is fundamentally a management function, not a technology one.

As AI evolves from chat interfaces into systems that participate directly in workflows and decisions, leaders will increasingly have to manage how these systems interact with people, processes, and accountability structures. Deploying AI will become easier. Managing its consequences will become harder.

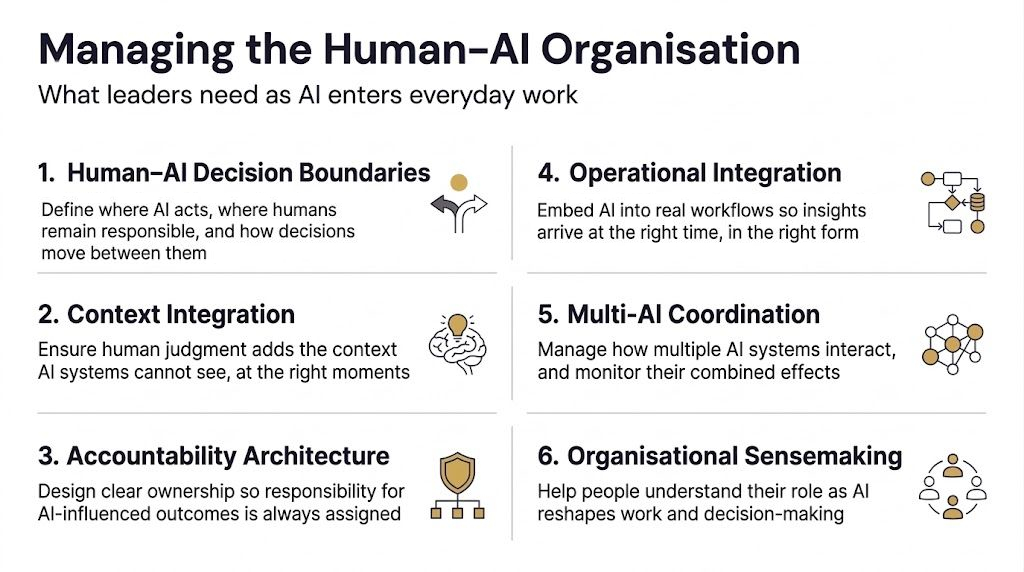

From these conversations, six leadership capabilities consistently emerge that determine whether AI strengthens decisions or quietly undermines them.

Together, these capabilities form a practical model for managing the human-AI organisation.

The examples below are drawn from cases leaders and startup founders have shared with me. The situations are real, though the context has been simplified and details modified to make the dynamics clearer.

1. Defining Human-AI Decision Boundaries

Where should AI act, where must humans remain in control, and how should the two collaborate?

Much of the current debate about AI in organisations revolves around replacement. Will AI replace analysts, designers, or customer support teams?

That framing misses the challenge leaders will actually face. The real question is not replacement. It is how decision authority will be shared between humans and AI systems.

As AI begins to participate in everyday workflows, leaders will have to decide where the system acts independently, where humans remain responsible, and where the two collaborate. These boundaries rarely emerge automatically. When they are left undefined, recommendations quietly become decisions, and accountability becomes unclear.

Consider these cases:

Hidden bias in data

A lending AI began flagging certain surnames as higher risk based on historical default patterns. The model was not explicitly using religion or caste. By most technical definitions it even passed fairness checks.

But the underlying data was sparse, and patterns began to drift. A small business owner with a clean record found their loan application rejected because their surname resembled others associated with past defaults.

The model was technically “fair”. The outcome was not.

When AI learns past hiring bias

A recruitment AI was introduced to streamline interview scheduling. The system screened resumes, ranked candidates, and automatically assigned interview slots.

After a few months, a pattern began to emerge. Candidates from non-elite universities consistently received worse interview times—late evenings, inconvenient time zones, or shorter notice.

No one had programmed the system to do this. It had simply learned patterns from past hiring decisions.

Treating this as an edge case missed the point.

Most organisations already operate with clear escalation paths. Credit approvals move upward when risk thresholds are crossed. Risk flags trigger review by senior teams. Hiring decisions pass through multiple layers before an offer is made. These chains exist so that difficult decisions can move across roles until someone with the right authority makes the call.

Introducing AI complicates this structure. A model may generate a recommendation, another system may interpret or operationalise it, and the case may still require approval from several human roles before it escalates or converges. Decisions now travel across systems and people, often in ways the original process was never designed for.

At that point the real design questions begin to surface. Who ultimately takes responsibility for the outcome? Which role has the authority to override the system’s recommendation? What exactly counts as an edge case—and who decides when one has occurred? And once an edge case is identified, how should the organisation respond?

These questions matter because edge cases are precisely where automated systems struggle most. If escalation paths and human roles are not clearly defined, the system simply continues operating even when the outcome is wrong.

Designing those boundaries between systems, roles, and authority is the real work behind human-AI decision boundaries.

Even when those boundaries are clear, however, another limitation quickly becomes visible. AI systems lack context.

And that brings us to the second capability leaders must build.

2. Context Integration

How do we ensure human insight adds the context AI systems cannot see?

AI systems can process enormous amounts of information, but they still struggle to understand the circumstances in which decisions unfold. In most organisations, those circumstances matter as much as the data itself. Timing, relationships, reputational risk, internal dynamics, and external events often shape whether a decision is wise or misguided.

These signals rarely appear cleanly in datasets, yet experienced professionals rely on them constantly.

Consider these cases.

Miscalculated opportunism

A marketing team used an AI system to draft a campaign responding to a competitor’s pricing change. The model analysed historical campaigns and suggested an aggressive message highlighting the competitor’s weakness.

From a purely analytical standpoint, the recommendation was sound. But the competitor was simultaneously dealing with a product recall that had received significant public attention. Launching the campaign at that moment would have appeared opportunistic and risked damaging long-term brand trust.

A human leader reviewing the draft recognised the timing issue immediately and postponed the campaign.

Perspective loss

A legal team used an AI assistant to generate contract language for a long-standing client. The clause the system produced was technically correct and aligned with company policy.

However, the client had historically preferred simpler contracts and informal language. Previous negotiations had taken weeks precisely because of this issue. Sending the AI-generated version unchanged would likely have reopened those tensions.

The lawyer revised the clause before sending it.

In both situations, the AI system performed exactly as designed. It identified patterns and produced outputs consistent with its training data. What it could not see was the surrounding context—the history, relationships, and timing that experienced professionals routinely take into account.

This is why effective AI use depends on context integration. Systems generate signals; humans interpret them in light of circumstances the model cannot fully observe.

For leaders, the challenge is not simply encouraging employees to “use judgment”. It is designing workflows where context can enter the decision process at the right moment. Human review points, domain expertise, and institutional memory need to remain part of the system rather than being pushed out in the pursuit of efficiency.

Even when context is added, another challenge quickly emerges: Who ultimately owns the outcome?

3. Accountability Architecture

Who remains responsible when AI participates in decisions?

Once AI begins influencing decisions across an organisation, the question of responsibility becomes unavoidable. Systems may generate recommendations, prioritise actions, or even trigger workflows, but the outcomes still affect customers, employees, and partners. Someone has to remain accountable.

This becomes complicated because AI systems often operate across functions. A model may be trained by one team, deployed by another, and used in decisions made by a third. When something goes wrong, it can be difficult to establish who is responsible for what happened.

Consider this case.

Incorrect flagging

During a trial run with a retail bank, a startup deploying a fraud-detection model began to notice an unexpected pattern. The system analysed spending behaviour and automatically flagged suspicious transactions, blocking certain payments until they could be reviewed. From a technical standpoint the model performed well. Fraud detection improved and simulated loss scenarios showed measurable gains. But another pattern began to emerge during testing. Legitimate transactions were also being blocked more frequently. International payments failed. Purchases made while travelling were flagged as suspicious. Customer service teams reviewing the pilot results began to imagine what this would look like at scale.

The issue exposed a tension that already existed within the organisation. One part of the bank focused on minimising fraud losses, while another was responsible for customer experience and retention. The model had optimised strongly for one side of that tension. The real question therefore was not whether the model worked, but who should decide where that balance lay.

These are governance questions rather than technical ones. Someone must decide what trade-offs the system is allowed to make, who monitors the outcomes, and who has the authority to intervene when those outcomes begin to drift. Without clear ownership, problems like this tend to fall between teams. The data science group may see the model as performing correctly, operations teams deal with the fallout, and leadership becomes involved only once customer dissatisfaction becomes visible.

This is why organisations need accountability architecture around AI systems. Models may assist decisions, but responsibility for their consequences must remain clearly assigned. Leaders need to define who owns the performance of the system, who monitors unintended effects, and who has the authority to pause or modify the model when necessary. Even when those structures are in place, a more practical challenge remains: the system's output still has to reach the right people at the right point in their work.

4. Operational Integration

How should AI insights appear inside the flow of real work?

AI systems still have to fit into the everyday rhythm of work rather than ask people to adapt to them. In many organisations this is where adoption quietly breaks down. A model may generate accurate insights, but if those insights arrive at the wrong moment, in the wrong format, or to the wrong person, they simply go unused. The problem is not intelligence. It is integration.

Consider these cases.

Adapting to user preferences

A manufacturing company deployed an AI system to detect early signs of equipment failure. The model analysed sensor data from machines and produced alerts when patterns suggested an impending breakdown. Technically, the system worked well and identified anomalies much earlier than human supervisors typically could. But the alerts were delivered through a dashboard that required managers to check it manually. On the factory floor, supervisors spent most of their time walking the line rather than sitting at terminals, so many warnings went unnoticed. When the same signals were later delivered through mobile alerts during routine inspections, response times improved immediately. The intelligence had not changed. The way it entered the workflow had.

Information overload

In another organisation, an AI system generated detailed daily briefings for senior leadership, summarising operational metrics, market developments, and potential risks. The reports were thorough and analytically sound. Within weeks, however, executives stopped reading them. The reports arrived late in the evening, ran several pages long, and competed with a stream of other internal updates. When the system was redesigned to produce a short, structured summary before the weekly leadership meeting, the briefings became a regular input into discussions. The analysis itself remained unchanged. What changed was how it appeared within the decision process.

These examples illustrate a simple but often overlooked reality. AI systems operate inside human workflows, where timing, format, and attention determine whether insights actually influence decisions. For leaders, operational integration therefore becomes a design problem: when should the system surface a signal, who should see it first, and how should that signal appear so that it fits naturally into the work being done? Organisations that succeed with AI treat these questions as seriously as model performance, refining how intelligence enters everyday processes until the system becomes part of the workflow rather than an additional layer of effort.

Even when AI systems are well integrated into individual workflows, another challenge eventually appears. As organisations deploy more systems across functions, those systems begin interacting with one another. And that is where a new source of fragility begins to emerge.

5. Multi-AI Coordination

How do we manage interactions when multiple AI systems begin operating across the organisation?

We are still in the early days of AI adoption. Most organisations today are building early agents or experimenting with distinct AI workflows. But it is already clear where things are heading. Over time, businesses will deploy multiple AI systems across functions—agents that forecast demand, monitor risk, optimise logistics, draft communication, or coordinate operations. As these systems begin to interact, the challenge will no longer be what a single model decides, but what emerges when several systems start influencing one another.

Consider these hypothetical scenarios.

Handling environmental volatility

A retail company deploys an AI system to forecast demand and optimise inventory across its stores. Under normal conditions the model performs extremely well. It learns seasonal patterns, adjusts stock levels dynamically, and reduces excess inventory.

But during a period of tariff revisions that disrupt global supply chains, the system struggles to adapt. The model has been optimised for stable conditions, and when supply becomes volatile the recommendations begin amplifying the disruption rather than absorbing it. Inventory shortages appear in some regions while other warehouses hold excess stock.

The system is calibrated for efficiency. It becomes brittle when the environment changes.

AI models face off

In a logistics company, two AI systems are introduced independently. One optimises delivery routes across regional hubs. Another predicts weather disruptions and adjusts schedules accordingly.

Individually, both systems perform well. But once deployed together, unexpected interactions begin to appear. Weather adjustments trigger route changes that cascade through delivery schedules, creating delays across several hubs. Each system behaves as designed, yet their combined effect produces outcomes no one anticipated.

The issue is not a faulty model. It is the interaction between them.

As organisations deploy more AI systems, these kinds of interactions will become increasingly common. Failures will not necessarily originate inside a single system. They will emerge from the way systems influence one another. That is why leaders designing AI-enabled workflows will increasingly need to think about multi-AI coordination: how systems are allowed to interact, who observes their combined behaviour, and when a human should step in if the interaction begins producing unintended outcomes.

These scenarios are still emerging, but they point to a design challenge that organisations will soon have to address. As AI capabilities spread across functions, thinking about systems individually will not be enough. The interaction between them will become part of the operational architecture leaders need to manage.

6. Organisational Sensemaking

How do leaders help people understand their role in an AI-enabled organisation?

Across industries today, conversations about AI carry a strange mix of excitement and unease. Many professionals are astonished by what these systems can do. Tasks that once required hours of effort can now be completed in minutes. Analysis appears instantly. Drafts emerge fully formed.

At the same time, these capabilities raise uncomfortable questions about where people fit in the new workflows being created.

Consider these cases.

Risk of advocating change

During a consulting engagement, a senior manager listened carefully as I outlined how AI could simplify certain workflows and reduce friction. When the discussion ended, she nodded and said quietly, “I agree with everything you’re saying.”

Then she added something revealing.

“I wouldn’t be able to take this to my boss in the US the way it’s framed here. Right now he is making these decisions and his head is on the line. If I push something like this and the organisation reacts badly, the risk becomes mine. In a job market like this, I’m not sure I want to take that chance.”

The hesitation was not about the technology. It was about what advocating for change might mean personally.

Fear of obsolescence

An architect friend of mine, someone who has spent years designing complex systems, recently admitted something similar in private conversation. As he worked on building AI-driven capabilities into the systems he was designing, he found himself wondering whether he was gradually building his own replacement.

“When the system becomes good enough,” he asked half-jokingly, “do they still need me?”

Neither of these reactions is unusual. In many organisations today, professionals are still trying to understand what working alongside increasingly capable systems will mean for their expertise, their influence, and their long-term role.

This is why organisational sensemaking matters.

Leaders often approach AI adoption as a technical or operational challenge, focusing on models, workflows, and efficiency gains. But the human interpretation of these systems can shape outcomes just as strongly. If people feel uncertain about where they fit, they may hesitate to engage fully with the systems being introduced.

The risk is subtle but real. Organisations may succeed in building powerful AI capabilities while simultaneously creating an environment where experienced professionals disengage or quietly move on.

That is precisely the moment when the organisation most needs thoughtful human judgment working alongside increasingly capable systems.

Helping teams make sense of this transition may not fully eliminate uncertainty or fear. But done well, it can give professionals a clearer sense of how their expertise continues to matter as these systems become part of everyday work.

AI will continue expanding inside organisations over the next decade. New models will emerge, agents will automate more tasks, and workflows will increasingly combine human and machine intelligence.

The harder question is whether organisations will build environments where their smartest people remain willing to question, interpret, and guide these systems. Without that, even powerful AI will lead to weaker decisions.

Founding Fuel is sustained by readers who value depth, context, and independent thinking.

If this essay helped you think more clearly, you may choose to support our work.

Shrinath V

Founder, The Salient Advisory | Product & AI Strategy Advisor

Shrinath V. runs The Salient Advisory, where he helps leaders uncover blind spots and make smarter bets in the age of AI. With two decades of experience across global firms and startups, he advises product teams on strategy and execution.

He is also a long-time mentor with Google for Startups, where he has coached hundreds of founders, including many AI-led ventures, on the realities of selling to enterprise. Over the last year, his focus has been on AI adoption in practice—how leaders can move beyond experiments and bring AI into the real work of teams, decisions, and execution.

Shrinath is on LinkedIn and writes a blog, Blind Spots to Big Bets, on Substack.
